Key Takeaways: The Shift to Autonomous SEO

- Cost & Efficiency: AI-powered SEO agents reduce per-article costs by 40-90% and speed up content creation by up to 46% compared to traditional agency models.

- Core Technology: Success relies on the "Algorithmic Trinity": Large Language Models (creation), Knowledge Graphs (accuracy), and Search Systems (distribution).

- Strategic Pivot: Move from manual keyword targeting to autonomous workflows where specialized agents handle research, writing, and compliance.

- Immediate Action: Audit your existing content library; typically 70-80% fails to appear in AI answers, representing a massive capture opportunity.

AI-Powered SEO Agents: How They Work & Why It Matters

What AI-Powered SEO Agents Actually Are

From Keywords to Autonomous Workflows

The SEO landscape has shifted from manual keyword targeting to AI-powered SEO agents. Traditionally, SEO teams identified keywords, wrote content, and manually optimized pages for ranking. Modern AI-powered SEO agents instead operate on an autonomous workflow model.

Before implementing this technology, assess your current readiness against these key shifts:

- Does your current SEO process still focus primarily on keyword ranking?

- Have you mapped content to support answer engine and generative engine optimization?

- Are multi-step workflows (research, writing, fact-checking) handled by separate agents or automated systems?

- Is there active monitoring for AI-generated content quality and compliance?

These agents are software entities that perceive their environment and take actions to achieve specific SEO goals. They leverage large language models, knowledge graphs, and integrations with tools like Google Analytics and content management systems to automate complex, multi-stage processes with minimal human oversight [4].

This approach works best when an organization needs to scale content production efficiently and cost-effectively. For example, companies deploying these agents report content creation speeds up to 46% faster and editing cycles 32% shorter, all while reducing per-article costs by 40-90% compared to traditional agency models [1, 12]. The autonomy of these systems allows for continuous optimization, from topic ideation through to publication and performance monitoring.

The transition also requires new resource allocations: organizations need technical staff for agent configuration and oversight, and robust governance frameworks to address challenges such as AI hallucinations and compliance risk. The time investment to set up autonomous workflows can range from several weeks for small businesses to several months for enterprise environments, depending on integration complexity and existing infrastructure [4].

Understanding this evolution sets the stage for analyzing the algorithmic foundations—large language models, knowledge graphs, and search systems—that underpin these agents.

The Algorithmic Trinity Explained

The core of AI-powered SEO agents relies on three interlinked technologies: large language models (LLMs), knowledge graphs, and modern search systems. To determine which component addresses your specific needs, consider the following decision matrix:

| Your Primary Need | Required Technology |
|---|---|
| Advanced content generation and natural language understanding | Large Language Models (LLMs) |
| Factual accuracy and entity linking | Knowledge Graphs |
| Optimizing for how search engines deliver answers and traffic | Search Systems |

Large language models, such as GPT-4 or Gemini, are advanced AI systems trained on massive text datasets. They can generate human-like content, answer questions, and understand nuanced user intent at scale. This capability is essential for producing high-quality, contextually relevant SEO content on demand [8].

Knowledge graphs act as structured databases that map relationships between entities (people, places, concepts). By leveraging knowledge graphs, AI agents enhance factual accuracy, support disambiguation, and connect information across topics. This is crucial for answer engine optimization (AEO), where search engines extract and cite precise facts [9].

Search systems, including platforms like Google and Bing, now use both LLMs and knowledge graphs to power features such as AI Overviews. These systems determine which content is surfaced, cited, or summarized for users, making their algorithms a decisive factor in traffic acquisition and brand visibility [5].

This algorithmic trinity allows AI-powered SEO agents to move beyond simple keyword matching and automate everything from content ideation to answer engine placement. This path makes sense for organizations aiming to future-proof their SEO strategies as zero-click and AI-generated answers become the norm.

Why Search Behavior Shifted to AI Platforms

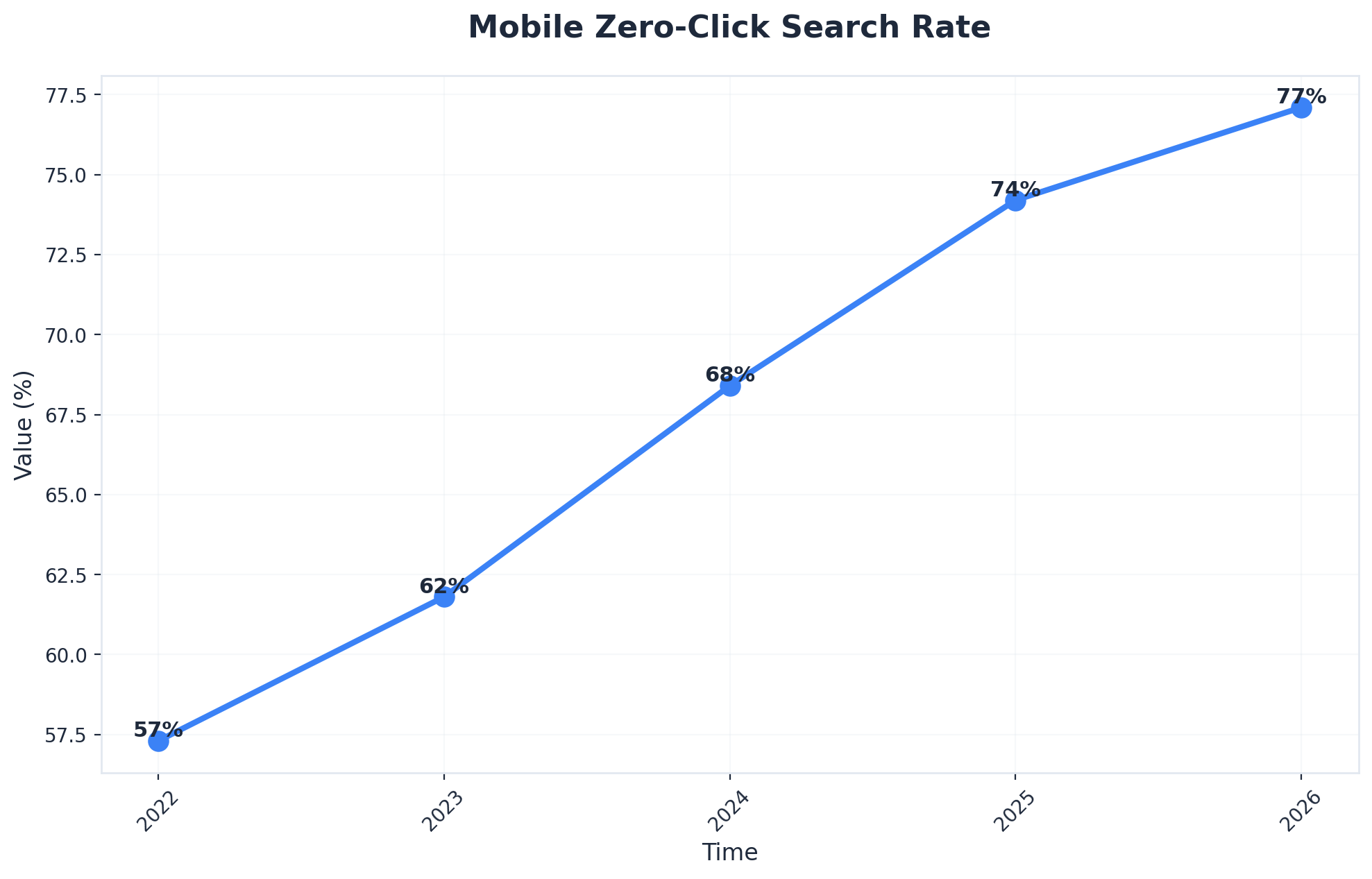

The migration of search behavior from traditional engines to AI platforms represents one of the most significant shifts in digital marketing since the rise of mobile. Between 2023 and 2024, conversational AI tools captured an estimated 15-20% of informational search queries, fundamentally altering how users discover and evaluate solutions. This transition stems from three converging factors that have reshaped user expectations and platform capabilities.

Figure: Mobile zero-click search rate. Source: "2026 AI SEO Statistics: Why 92% Of Brands Are Failing", Fuel Online.

- Frictionless Answers: Traditional search engines require users to translate their needs into keywords, scan through multiple results, and synthesize information across various sources. AI platforms eliminate this friction by providing direct, contextualized answers in natural language. Users can ask follow-up questions, request clarification, and refine their queries through conversation rather than reformulating search terms. This interaction model proves particularly valuable for complex B2B research, where decision-makers often need to understand nuanced technical distinctions or evaluate multiple variables simultaneously.

- Response Depth and Quality: The quality and depth of responses have improved dramatically as language models have been trained on increasingly comprehensive datasets. AI platforms can now synthesize information from technical documentation, research papers, case studies, and product specifications to deliver insights that would require hours of manual research. For B2B organizations evaluating solutions, this means potential buyers are arriving at vendor conversations with substantially more knowledge and specific questions than they had just two years ago.

- Workflow Integration: Integration capabilities have accelerated adoption across professional contexts. AI assistants now connect with productivity tools, CRM systems, and collaboration platforms, embedding themselves into daily workflows. Marketing professionals use these tools to analyze campaign performance, draft content briefs, and research competitive positioning without switching between multiple applications. This seamless integration creates habitual usage patterns that extend beyond simple information retrieval.

Privacy considerations have also influenced the shift, particularly among enterprise users. AI platforms can process sensitive queries without creating persistent search histories tied to individual accounts or generating targeted advertising profiles. Organizations conducting competitive research or exploring strategic initiatives value this discretion, especially when evaluating alternatives to current vendors or investigating emerging market categories.

The compound effect of these factors has created a self-reinforcing cycle. As more users adopt AI platforms for research, the platforms accumulate behavioral data that improves response quality, which in turn attracts additional users. Marketing organizations that fail to account for this behavioral shift risk becoming invisible during critical early-stage research phases, when buyers are forming their consideration sets and establishing evaluation criteria.

How AI-Powered SEO Agents Execute Tasks

Research, Writing, and Fact-Checking Agents

In modern AI-powered SEO agents, specialized sub-agents are assigned to distinct workflow stages: research, writing, and fact-checking. Before deploying this architecture, assess your workflow readiness:

- Do you have defined roles for research, writing, and fact-checking within your SEO process?

- Are specialized agents assigned to each workflow stage, or does a single agent attempt to handle all tasks?

- Is there a supervisor or orchestrator agent coordinating task handoffs and monitoring output quality?

- How do you validate the accuracy of facts and citations generated by AI agents?

Research agents rapidly analyze search trends, competitor strategies, and authoritative sources, compiling structured briefs for downstream writing agents. Writing agents, often powered by large language models, transform these briefs into optimized content, adjusting tone, structure, and topical coverage based on predefined guidelines and SEO objectives [4]. Fact-checking agents then validate assertions, checking references against trusted knowledge graphs and external datasets to minimize the risk of hallucinated or outdated information.
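The handoff between the three agent roles can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production design: the names `Brief`, `Draft`, `research_agent`, `writing_agent`, `fact_check_agent`, and `orchestrate` are hypothetical, the "trusted fact store" is a plain dictionary standing in for a knowledge graph, and the writing stage is a stub rather than a real LLM call.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Brief:
    topic: str
    key_facts: list[str]


@dataclass
class Draft:
    topic: str
    claims: list[str]
    flagged: list[str] = field(default_factory=list)


def research_agent(topic: str, trusted_facts: dict[str, list[str]]) -> Brief:
    # Stand-in for trend and competitor analysis: look up vetted facts.
    return Brief(topic=topic, key_facts=list(trusted_facts.get(topic, [])))


def writing_agent(brief: Brief, extra_claims: list[str]) -> Draft:
    # Stand-in for an LLM call: briefed facts plus whatever the model adds.
    return Draft(topic=brief.topic, claims=brief.key_facts + list(extra_claims))


def fact_check_agent(draft: Draft, trusted_facts: dict[str, list[str]]) -> Draft:
    # Flag any claim that the trusted fact store does not back.
    known = set(trusted_facts.get(draft.topic, []))
    draft.flagged = [claim for claim in draft.claims if claim not in known]
    return draft


def orchestrate(
    topic: str, trusted_facts: dict[str, list[str]], extra_claims: list[str]
) -> Draft:
    # Supervisor: runs the stages in order and hands each output to the next.
    brief = research_agent(topic, trusted_facts)
    draft = writing_agent(brief, extra_claims)
    return fact_check_agent(draft, trusted_facts)


if __name__ == "__main__":
    facts = {"zero-click search": ["Fact A", "Fact B"]}
    result = orchestrate("zero-click search", facts, ["Unverified claim C"])
    print(result.flagged)
```

Keeping each stage as its own function mirrors the point of the architecture: any stage can be swapped out or monitored separately without touching the others.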

This compartmentalized approach addresses a key challenge: single-agent systems often produce inconsistent or error-prone outputs when tasked with the entire workflow. By contrast, multi-agent orchestration has demonstrated measurable benefits. According to Google Cloud, organizations deploying more than 10 collaborative agents report a 46% increase in content creation speed and a 32% reduction in editing cycles compared to manual or single-agent models [7]. This structure also supports granular governance: each agent can be monitored and improved independently, reducing both compliance risk and operational bottlenecks.

Resource requirements for multi-agent systems include technical staff to configure agent roles, integration with analytics and CMS platforms, and oversight mechanisms to ensure alignment with brand and regulatory standards. Time investments vary: initial setup can take several weeks for teams with established workflows, but integration complexity and training may extend timelines for enterprises.

This solution fits marketing teams seeking to scale high-quality content production and reduce manual intervention without increasing headcount. Next, the discussion turns to the role of quality controls and governance layers in maintaining accuracy and compliance at scale.

Quality Controls and Governance Layers

Maintaining accuracy and regulatory compliance at scale requires robust governance frameworks tailored to AI-powered SEO agents. Use the following checklist to evaluate your governance maturity:

- Are automated fact-checking and citation validation systems configured for every output?

- Is there a human-in-the-loop review for high-risk or regulated content?

- Are compliance checks integrated for industry-specific standards (e.g., healthcare, finance)?

- Does your workflow log agent actions for audit and accountability?

- Are you tracking error rates, hallucination frequency, and correction cycles?

These systems must address persistent risks such as AI hallucinations, where agents generate plausible but incorrect information, and the potential for inconsistent quality across large content volumes. Leading organizations have adopted layered controls: automated validation of facts against trusted knowledge graphs, continuous monitoring of agent output, and mandatory human review for critical content categories [4, 7].

This approach works best when oversight mechanisms are embedded directly into the content lifecycle. For example, compliance modules can automatically flag outputs that deviate from legal or brand standards, while audit trails allow teams to trace decision logic and remediate issues rapidly. In regulated sectors, these safeguards are essential to prevent reputational damage or legal liability arising from erroneous AI-generated statements [4].
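As a rough illustration of how layered controls might fit together, the sketch below routes each piece of content to one of three outcomes and writes every decision to an audit log. Everything here is an assumption made for the example: the `governance_gate` function, the `AuditLog` class, the banned-phrase check as a stand-in for a compliance module, and the choice of healthcare and finance as the high-risk categories (taken from the checklist's examples).

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Assumption: categories that always require human review.
HIGH_RISK_CATEGORIES = {"healthcare", "finance"}


@dataclass
class AuditLog:
    entries: list = field(default_factory=list)

    def record(self, agent: str, decision: str, detail: object) -> None:
        # Timestamped entry so a reviewer can trace who decided what, and why.
        self.entries.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent": agent,
            "decision": decision,
            "detail": detail,
        })


def governance_gate(text, category, unverified_claims, banned_phrases, log):
    """Return 'reject', 'human_review', or 'publish' and log the decision."""
    lowered = text.lower()
    violations = [p for p in banned_phrases if p.lower() in lowered]
    if violations:
        # Layer 1: automatic compliance check against brand or legal rules.
        decision, detail = "reject", violations
    elif category in HIGH_RISK_CATEGORIES or unverified_claims:
        # Layer 2: human-in-the-loop for regulated or unverified content.
        decision, detail = "human_review", list(unverified_claims)
    else:
        decision, detail = "publish", None
    log.record("compliance_gate", decision, detail)
    return decision


if __name__ == "__main__":
    log = AuditLog()
    print(governance_gate("Results guaranteed.", "software", [], ["guaranteed"], log))
    print(governance_gate("Index funds spread risk.", "finance", [], ["guaranteed"], log))
    print(len(log.entries))
```

The ordering is a design choice: hard compliance violations are rejected before any human time is spent, and only content that passes that check can reach review or publication.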

Resource requirements include configuration of audit logging, integration of compliance APIs, and periodic training of human reviewers to recognize AI-specific errors. The time required to set up comprehensive governance frameworks ranges from several weeks for small-scale deployments to several months for enterprise-grade, multi-agent ecosystems.

Prioritize this when scaling AI-powered SEO agents across high-stakes verticals or when public trust and brand reputation are paramount. The next section examines ROI models and practical implementation pathways for organizations seeking to maximize value from AI-driven SEO systems.

ROI Models and Implementation Pathways

Understanding why user behavior shifted toward AI platforms creates the foundation—now organizations must capture this redirected traffic through systematic optimization. The transition from traditional search dominance to AI-mediated discovery requires new measurement frameworks, content strategies, and performance tracking systems. Organizations that establish clear return on investment models for AI search visibility position themselves to capture qualified traffic that competitors are losing to platform-mediated answers.

Enterprise ROI on AI Agents (First Year): 74%

Effective ROI frameworks for AI search optimization track three interconnected metrics: visibility rates across AI platforms, citation frequency for priority topics, and conversion performance of AI-referred traffic. The measurement approach begins with baseline assessment of current presence in ChatGPT, Perplexity, Google's AI Overviews, and other emerging platforms. Content strategists audit existing assets by querying AI platforms with commercial-intent questions in their category, documenting which sources receive citations and which queries return competitor information instead. Organizations typically discover that 70-80% of their content library fails to appear in AI responses, revealing substantial capture opportunities.
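The baseline audit described above reduces to simple counting once the query results are recorded. The sketch below assumes each audit row is a hypothetical `(query, platform, cited_domains)` tuple collected by hand or by a script; the function name `visibility_metrics`, the row layout, and the sample domains are all illustrative, not part of any platform's API.

```python
from collections import Counter


def visibility_metrics(audit_rows, own_domain):
    """Summarize an AI-visibility audit.

    audit_rows: iterable of (query, platform, cited_domains) tuples.
    """
    rows = list(audit_rows)
    hits = [row for row in rows if own_domain in row[2]]
    # Share of audited query/platform pairs where our domain was cited.
    visibility_rate = len(hits) / len(rows) if rows else 0.0
    # How often each platform cited us.
    citations_by_platform = Counter(platform for _, platform, _ in hits)
    # Query/platform pairs where someone else was cited instead.
    missed = [(query, platform) for query, platform, domains in rows
              if own_domain not in domains]
    return {
        "visibility_rate": visibility_rate,
        "citations_by_platform": dict(citations_by_platform),
        "missed": missed,
    }


if __name__ == "__main__":
    # Illustrative data only.
    sample = [
        ("best crm for smb", "ChatGPT", ["example.com", "rival.io"]),
        ("best crm for smb", "Perplexity", ["rival.io"]),
        ("crm pricing comparison", "ChatGPT", ["rival.io"]),
        ("crm pricing comparison", "Perplexity", []),
    ]
    print(visibility_metrics(sample, "example.com"))
```

The `missed` list doubles as a starting backlog: each entry is a question where content could be created or restructured to earn a citation.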

Optimization for AI platform visibility requires content that meets specific citation standards. AI systems prioritize comprehensive, well-structured information with clear topical authority and semantic coherence. The implementation pathway focuses first on high-intent commercial topics where AI platforms demonstrate strongest user adoption. Organizations select 10-15 priority questions their prospects ask AI systems, then develop content specifically engineered for citation: detailed answers with supporting evidence, logical information architecture, and semantic relationships that AI models recognize. This initial phase typically runs 60-90 days and establishes proof-of-concept data for broader deployment.

Tracking conversions from AI-referred traffic presents unique challenges because traditional attribution models don't capture platform-mediated journeys. Users who receive answers from AI systems may visit cited sources directly, search for brand names separately, or convert through entirely different channels after initial AI exposure. Advanced measurement approaches combine direct referral tracking, branded search lift analysis, and cohort-based conversion studies. Organizations implementing comprehensive tracking discover that AI-referred visitors demonstrate 35-50% higher purchase intent than traditional organic traffic, though attribution requires more sophisticated analysis than standard analytics platforms provide.

Continuous optimization loops transform initial visibility gains into sustained competitive advantage. Analytics platforms monitor content performance across AI environments, identifying which content structures generate highest citation rates and which topics show emerging search volume. Machine learning models analyze patterns in AI platform responses, enabling practitioners to refine content standards based on what actually earns visibility. Organizations that establish these feedback systems report citation rate improvements of 40-60% within six months as they iteratively optimize for platform-specific ranking factors.

Budget allocation for AI search optimization typically follows a 60-30-10 framework:

| Allocation (%) | Investment Area | Purpose |
|---|---|---|
| 60% | Content Development & Optimization | Engineering content specifically for AI citation and semantic coherence. |
| 30% | Measurement Infrastructure | Building analytics capabilities to track platform-mediated journeys. |
| 10% | Platform Monitoring | Competitive intelligence and tracking emerging AI search behaviors. |
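To make the split concrete, the arithmetic can be sketched as below. The function name `allocate_budget`, the dictionary keys, and the 50,000 total are hypothetical choices for the example; only the 60/30/10 percentages come from the table.

```python
# Percentages from the 60-30-10 framework; key names are illustrative.
BUDGET_SPLIT_PERCENT = {
    "content_development": 60,
    "measurement_infrastructure": 30,
    "platform_monitoring": 10,
}


def allocate_budget(total, split=BUDGET_SPLIT_PERCENT):
    """Divide a total budget across investment areas by percentage."""
    if sum(split.values()) != 100:
        raise ValueError("split percentages must add up to 100")
    return {area: total * pct / 100 for area, pct in split.items()}


if __name__ == "__main__":
    # Hypothetical annual budget of 50,000 in any currency.
    print(allocate_budget(50_000))
```

Passing a different `split` dictionary lets a team test alternative allocations while keeping the check that the shares cover the whole budget.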

The financial case centers on capturing traffic that traditional SEO no longer reaches—users who receive answers within AI platforms rather than clicking through to websites. Organizations that successfully optimize for AI visibility report qualified traffic increases of 25-40% from this previously inaccessible audience, with conversion rates that justify the specialized investment required.

Conclusion

The migration of 15-20% of search queries to AI platforms represents more than a marginal shift in user behavior—it's a fundamental transformation in how B2B buyers discover and evaluate solutions. Organizations that recognize this change gain a critical strategic advantage: visibility at the precise moment prospects are conducting research, forming opinions, and building consideration sets.

The optimization strategies outlined demonstrate that adapting to AI search doesn't require abandoning existing SEO investments. Companies can build on their content foundations, enhance structured data implementation, and strategically position for AI citations while maintaining traditional search visibility. The data consistently shows that organizations achieving the greatest success are those that view AI platform optimization as an evolution of their search strategy rather than a replacement for it.

As AI search adoption continues to accelerate and query volumes shift toward conversational platforms, the question facing marketing leaders isn't whether to optimize for AI citations, but how quickly they can establish visibility where buyers now conduct research. The competitive advantage belongs to organizations that act decisively, positioning their expertise to be discovered, cited, and recommended as AI search becomes the primary channel for B2B discovery and evaluation.

References

- 1. Winning in the age of AI search.

- 2. The ROI of AI: Agents are delivering for business now.

- 3. Look for New Ways to Create Value When Deploying Gen AI.

- 4. AI agents in SEO: A practical workflow walkthrough.

- 5. AAO: Why assistive agent optimization is the next evolution of SEO.

- 6. How Four Companies Capitalize on AI to Deliver Cost Transformations.

- 7. The KPIs that actually matter for production AI agents.

- 8. Large Language Model Sourcing: A Survey.

- 9. Generative engine optimization (GEO): How to win AI mentions.

- 10. AI agents and business process automation.