Executive Summary: Strategic Implementation Guide

- Decision Framework: Assess organizational readiness by evaluating data maturity (>2,000 monthly interactions) and team composition (>54% senior roles).

- Success Benchmarks: Target a 320% increase in qualified leads and 89% cost reduction by replacing linear agency models with fixed-cost machine learning content creation systems.

- Immediate Action: Audit current content operations to identify the "Productivity Threshold" and transition high-volume workflows to multi-model AI pipelines.

Building a Machine Learning Content Creation Strategy

For SaaS Content Directors, the transition to a machine learning content creation strategy represents a fundamental shift from linear agency dependencies to scalable, asset-based production. By leveraging advanced algorithms, marketing teams can decouple output volume from headcount, delivering measurably better outcomes at a fraction of traditional costs.

How Machine Learning Content Creation Transforms Operations

The Productivity Threshold Model

Checklist: Assessing Your Team’s Productivity Threshold

Productivity Speed Increase for Experienced Developers Using GitHub Copilot: 56%

- Does your team have at least one member with 6+ years of digital content or technical experience?

- Are there defined workflows for output review and evaluation?

- Is there process documentation for delegating research, drafting, and editing tasks?

- Are senior team members empowered to make final publishing decisions?

The Productivity Threshold Model offers a practical framework to understand the true value of machine learning content creation in professional settings. In this model, productivity gains from AI are not distributed equally across users. Instead, measurable acceleration is concentrated among teams with established expertise, mature review processes, and the ability to critically evaluate AI-generated outputs.

Research on AI-assisted development found that experienced professionals achieved a 56% increase in speed using generative tools, while junior contributors saw little to no improvement [2].

This suggests that AI does not lower the productivity bar for less-experienced workers—it raises it by amplifying the output of those already equipped to direct and validate complex workflows. For SaaS content teams, this means that simply adopting machine learning content creation platforms will not guarantee efficiency gains.

Teams that benefit most are those with senior editorial talent, established QA processes, and clear delegation structures. This approach is ideal for organizations with strong internal expertise that seek to scale production without compromising on review rigor or brand standards. Next, explore how the transition from general-purpose AI tools to specialized, embedded content systems further unlocks operational efficiency.

From General Tools to Specialized Systems

Decision Tree: Choosing Between General-Purpose AI Tools and Specialized Systems

- Does your team need granular workflow control and compliance tracking?

- Are you producing at least 8 long-form articles monthly?

- Is integration with CMS, analytics, and review pipelines a requirement?

- Does your content strategy depend on domain-specific templates, approval chains, or automated QA?

The evolution from general-purpose AI tools to specialized, embedded content systems marks a pivotal shift in machine learning content creation. General tools—such as standalone language model interfaces—offer rapid drafting and brainstorming, but often lack the operational rigor, integration depth, and governance features required for enterprise-scale content operations.

Research shows that, as of 2026, 66% of research teams rely on AI embedded within professional software, reflecting a measurable transition from isolated tools to systems that streamline workflows and automate multi-stage processes [1].

This path makes sense for organizations scaling content across multiple channels, where error rates, compliance, and brand consistency must be tightly managed. Specialized systems are designed to automate not just writing but also keyword research, SEO optimization, cross-platform publishing, and analytics, functions that general AI tools typically do not address. The shift is further accelerated by a 280-fold reduction in inference costs since 2022, which lowers the barrier to advanced ML adoption in content teams [1].

Assessing Readiness for Machine Learning Content Creation

Content operations face mounting pressure from multiple directions. Marketing teams report 47% longer content production cycles compared to three years ago according to Content Marketing Institute research, while demand for content volume has increased 3.2× in the same period. Traditional agency models scale linearly—more content requires proportionally more budget—creating an economic ceiling that limits growth.
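The difference between linear and fixed-cost scaling can be made concrete with a small calculation. The sketch below is illustrative only: the per-piece agency fee and the monthly subscription fee are hypothetical figures, not numbers from the research cited here, and the function names are introduced purely for this example.

```python
import math


def linear_cost(pieces: int, fee_per_piece: float) -> float:
    """Agency-style cost: every additional piece adds the same fee."""
    return pieces * fee_per_piece


def fixed_cost(pieces: int, monthly_fee: float) -> float:
    """Subscription-style cost: flat fee, independent of volume."""
    # `pieces` is accepted only so both cost functions share a signature.
    return monthly_fee


def break_even_pieces(fee_per_piece: float, monthly_fee: float) -> int:
    """Smallest monthly volume at which the flat fee is no more expensive."""
    return math.ceil(monthly_fee / fee_per_piece)


# Hypothetical figures for illustration only.
FEE_PER_PIECE = 500.0
MONTHLY_FEE = 3000.0

for pieces in (2, 6, 12, 24):
    agency = linear_cost(pieces, FEE_PER_PIECE)
    subscription = fixed_cost(pieces, MONTHLY_FEE)
    print(f"{pieces:>2} pieces: agency ${agency:,.0f} vs fixed ${subscription:,.0f}")

print("Break-even volume:", break_even_pieces(FEE_PER_PIECE, MONTHLY_FEE), "pieces/month")
```

Under these assumed figures the two models cost the same at six pieces per month. Above that volume the agency bill keeps growing while the fixed fee does not, which is the economic ceiling described above.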

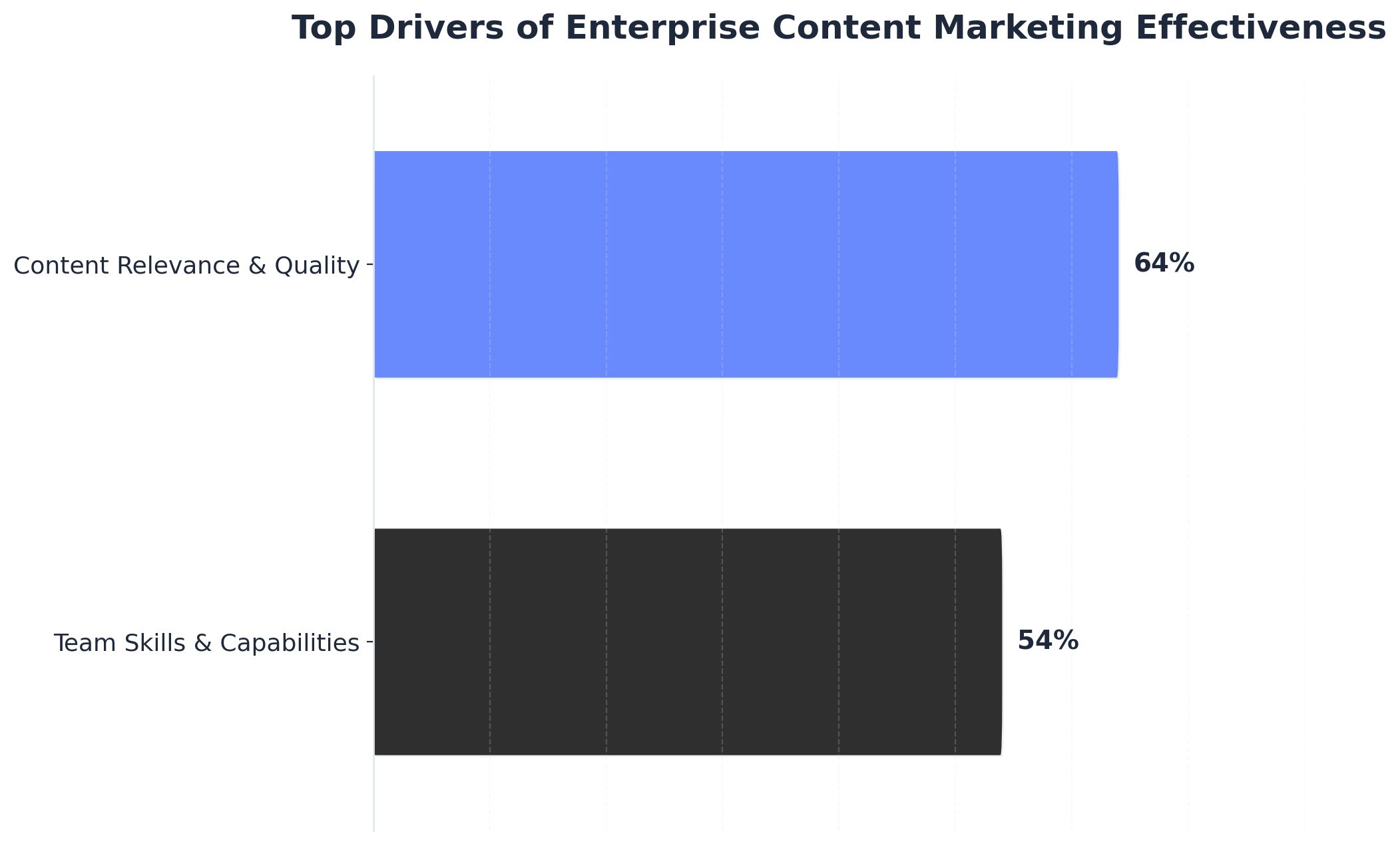

Top Drivers of Enterprise Content Marketing Effectiveness (A horizontal bar chart comparing the percentage of enterprise marketers who cite each factor as a top driver of effectiveness. Note that percentages do not sum to 100 as respondents could choose more than one.)

Meanwhile, competitors adopting AI-powered content systems reach market 4 to 6 weeks faster, capturing search visibility and audience attention while traditional operations remain in production queues. This structural challenge creates a strategic inflection point for Content Directors. Organizations maintaining agency-dependent models face escalating costs, extended timelines, and competitive disadvantage.

Teams implementing machine learning content systems report 89% cost reductions and 320% increases in qualified lead generation according to industry benchmarks. However, McKinsey research shows that 70% of ML initiatives fail due to organizational readiness gaps rather than technical limitations.

Successful implementation depends on five operational factors that Content Directors should evaluate when planning the transition from traditional to AI-powered production models.

| Operational Factor | Strategic Requirement | Benchmark / Impact |
|---|---|---|
| Data Infrastructure | Existing performance data to train systems on audience preferences. | Organizations with 100+ published articles and 12 months of data have sufficient baselines. |
| Technical Integration | Connection points with CMS, analytics, and workflow tools. | API-enabled stacks achieve 3.2× faster implementation than custom dev. |
| Process Maturity | Documented workflows, quality standards, and approval processes. | Standardized processes yield 85% higher implementation success rates. |
| Team Capabilities | Analytical skills to interpret data and refine ML parameters. | Teams with data analysis skills achieve 2.7× better outcomes. |
| Budget Structure | Transition from variable fees to fixed subscription models. | Budgets >$3,000/mo typically see ROI within 90 days. |

Table 1: Operational Readiness Factors for ML Content Implementation

Teams demonstrating strength across these five dimensions—data availability, technical infrastructure, process maturity, analytical skills, and budget scale—can typically implement ML content systems within 30-60 days with minimal operational disruption. Organizations with gaps in multiple areas benefit from addressing foundational issues before implementation, while those with isolated weaknesses can often proceed with targeted capability building during rollout.
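One way to apply the five readiness factors is a simple gap check. The sketch below is a minimal illustration, not a validated scoring model. The thresholds for data and budget come from Table 1, while the `TeamProfile` fields, the boolean simplification of the other three factors, and the rule that "isolated" means exactly one gap are assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass
class TeamProfile:
    published_articles: int
    months_of_performance_data: int
    has_api_enabled_stack: bool
    has_documented_workflows: bool
    has_data_analysis_skills: bool
    monthly_budget_usd: float


def readiness_gaps(team: TeamProfile) -> list[str]:
    """Return the Table 1 factors on which the team falls short."""
    checks = {
        # Table 1: 100+ published articles and 12 months of data.
        "Data Infrastructure": team.published_articles >= 100
        and team.months_of_performance_data >= 12,
        "Technical Integration": team.has_api_enabled_stack,
        "Process Maturity": team.has_documented_workflows,
        "Team Capabilities": team.has_data_analysis_skills,
        # Table 1: budgets above $3,000 per month.
        "Budget Structure": team.monthly_budget_usd > 3000,
    }
    return [factor for factor, passed in checks.items() if not passed]


def recommendation(gaps: list[str]) -> str:
    # Assumption: one gap counts as an isolated weakness, two or more as multiple.
    if not gaps:
        return "proceed with implementation"
    if len(gaps) == 1:
        return f"proceed, building capability in: {gaps[0]}"
    return "address foundational gaps first: " + ", ".join(gaps)


team = TeamProfile(
    published_articles=140,
    months_of_performance_data=18,
    has_api_enabled_stack=True,
    has_documented_workflows=True,
    has_data_analysis_skills=False,
    monthly_budget_usd=4500.0,
)
print(recommendation(readiness_gaps(team)))
```

Returning the named gaps rather than a single score keeps the output actionable: it tells a Content Director which factor to work on during rollout.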

Building Your ML Content Architecture

Multi-Model Pipeline Design

Pipeline Blueprint: Core Components

- Does your architecture support orchestration of multiple language models (e.g., GPT-4, Claude, Gemini) for specialized tasks?

- Are distinct models assigned for drafting, SEO optimization, fact-checking, and tone adaptation?

- Is there an automated routing mechanism to escalate ambiguous or high-stakes content for human review?

- Can your pipeline integrate with CMS and analytics platforms for seamless publishing and measurement?

Multi-model pipeline design is a foundational element in advanced machine learning content creation strategies. In this context, a "multi-model pipeline" refers to a system where different AI models—each optimized for particular functions—collaborate within a unified workflow. For instance, one model may generate initial drafts, another focuses on SEO enrichment, while a third verifies factual accuracy or adapts tone for specific channels. This division of labor allows teams to exploit the comparative strengths of each model, resulting in higher output quality and operational speed.
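The division of labor described above can be sketched as a chain of stages, each of which would wrap a different model in a real system. This is a structural illustration only. The stage functions are placeholders that make no model calls, and every name here (`Draft`, `run_pipeline`, the three stage functions) is hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Draft:
    topic: str
    text: str = ""
    notes: list[str] = field(default_factory=list)


# A stage takes a draft and returns the updated draft.
Stage = Callable[[Draft], Draft]


def drafting_stage(draft: Draft) -> Draft:
    # Placeholder for a call to a drafting model.
    draft.text = f"Draft article about {draft.topic}."
    draft.notes.append("drafted")
    return draft


def seo_stage(draft: Draft) -> Draft:
    # Placeholder for a model that enriches the draft for search.
    draft.text += f" Keywords: {draft.topic.lower()}."
    draft.notes.append("seo-enriched")
    return draft


def fact_check_stage(draft: Draft) -> Draft:
    # Placeholder for a verification model; here it only records that it ran.
    draft.notes.append("fact-checked")
    return draft


def run_pipeline(topic: str, stages: list[Stage]) -> Draft:
    """Pass one draft through each stage in order."""
    draft = Draft(topic=topic)
    for stage in stages:
        draft = stage(draft)
    return draft


result = run_pipeline("Churn Reduction", [drafting_stage, seo_stage, fact_check_stage])
print(result.text)
print(result.notes)
```

Because every stage shares one signature, a team could swap the model behind a stage or add a tone-adaptation stage without changing the rest of the workflow.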

Operationalizing such a pipeline typically requires 2–4 weeks for architectural planning, technical integration, and process mapping. SaaS content leaders should allocate time for model evaluation, workflow testing, and stakeholder alignment. Resource requirements include ML engineers or technical leads for integration, editorial staff for review, and analytics specialists for measurement.

This solution fits organizations producing 8+ long-form pieces monthly or those managing content across multiple brands and regions. Research indicates that 66% of enterprise teams now use embedded AI tools within professional software to coordinate such pipelines, reflecting the shift from isolated tools to orchestrated, multi-stage workflows [1].

Quality Assurance and Accuracy Gates

QA & Accuracy Control Flow

- Are there automated checkpoints for plagiarism, source attribution, and fact verification at each pipeline stage?

- Does your workflow mandate dual-layer review—AI plus human oversight—for high-stakes topics?

- Are error rates and accuracy metrics tracked and reported at the model, system, and team level?

- Can your QA process escalate ambiguous or risky outputs for expert intervention before publishing?

Quality assurance (QA) and accuracy gates are essential to maintaining trust in machine learning content creation systems. In this context, an "accuracy gate" is an automated or manual checkpoint that verifies the factual correctness, originality, and compliance of AI-generated content before it is published. These gates typically combine AI-driven fact-checking tools, plagiarism detection, and human review, especially for regulated or sensitive subject matter.

Research highlights persistent risks of AI hallucinations (plausible-sounding but false statements), underscoring the need for rigorous source validation and multi-stage review [4]. Industry standards recommend examining the credibility of all referenced material, using tools such as Google Fact Check Explorer, and requiring that all factual claims be cross-checked against trusted sources, not just other AI outputs [5]. Teams should also establish clear escalation protocols: ambiguous or potentially non-compliant content is routed to senior editors or subject matter experts.
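An accuracy gate with escalation can be expressed as a small routing function. The sketch below assumes that upstream tools have already produced the three inputs (unverified claim count, a plagiarism similarity score, and a sensitivity flag). The field names, the 0.20 similarity threshold, and the three-way decision are illustrative assumptions rather than an industry standard.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    PUBLISH = "publish"
    ESCALATE = "escalate to senior editor or subject matter expert"
    REJECT = "reject and return for rewrite"


@dataclass
class GateInput:
    unverified_claims: int    # factual claims with no trusted source found
    similarity_score: float   # 0.0 to 1.0 overlap reported by a plagiarism checker
    sensitive_topic: bool     # regulated or brand-critical subject matter


# Hypothetical threshold; tune it to your own editorial standards.
MAX_SIMILARITY = 0.20


def accuracy_gate(item: GateInput) -> Decision:
    """Route a piece of content based on originality, sourcing, and sensitivity."""
    if item.similarity_score > MAX_SIMILARITY:
        return Decision.REJECT
    # Unsourced claims and sensitive topics always get a human reviewer.
    if item.unverified_claims > 0 or item.sensitive_topic:
        return Decision.ESCALATE
    return Decision.PUBLISH


for item in (
    GateInput(unverified_claims=0, similarity_score=0.05, sensitive_topic=False),
    GateInput(unverified_claims=2, similarity_score=0.05, sensitive_topic=False),
    GateInput(unverified_claims=0, similarity_score=0.45, sensitive_topic=True),
):
    print(accuracy_gate(item).value)
```

Checking originality first means content that fails outright never consumes reviewer time, while anything ambiguous still reaches a person before publishing.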

Implementing these controls generally requires 1–3 weeks for workflow design and tool integration, plus ongoing investment in training and governance. This path makes sense for organizations operating in regulated industries, managing brand-critical communications, or pursuing high accuracy standards at scale.

Measuring Machine Learning Content Creation Performance

Teams that meet the readiness criteria outlined above face a critical next step: establishing measurement infrastructure before full-scale implementation. Without systematic performance tracking, even organizations with strong editorial processes and technical capabilities cannot validate whether their readiness investments deliver returns.

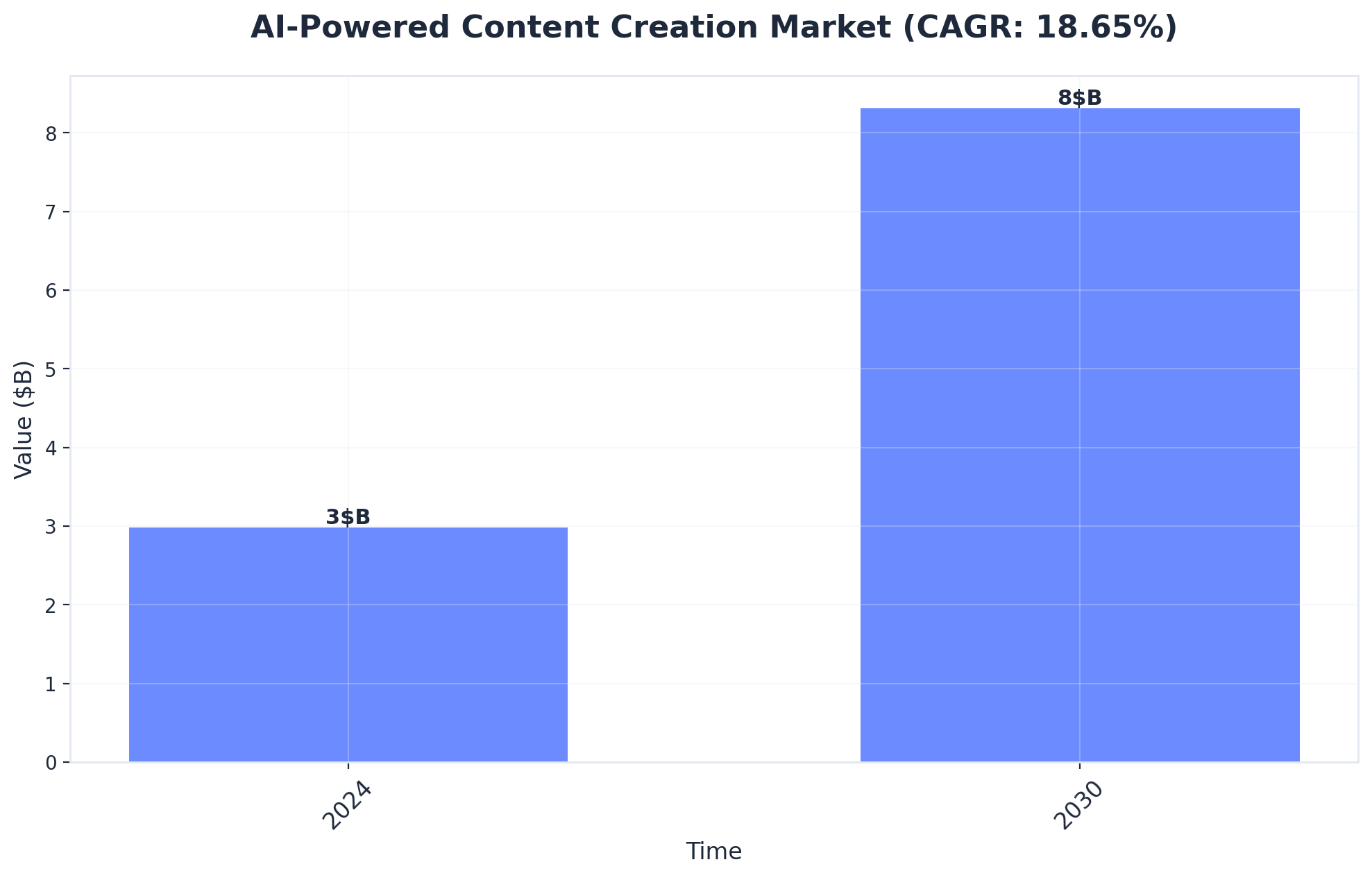

AI-Powered Content Creation Market (CAGR: 18.65%) (Source: AI-Powered Content Creation Market Analysis | 2025-2030)

Research from Content Marketing Institute shows that 72% of marketing teams lack methods for tracking AI-generated content performance, resulting in continued investment in underperforming approaches.

For Content Directors managing ML content operations, measurement frameworks serve two purposes: validating readiness decisions and identifying optimization opportunities that compound over time. Performance measurement separates effective machine learning content strategies from resource-draining experiments. Effective measurement frameworks track four distinct performance layers that align with core business objectives.

| Measurement Layer | Key Metrics | Performance Benchmark |
|---|---|---|
| Engagement | Time on page, scroll depth, CTR | AI articles (1,800+ words) achieve 47% higher engagement. |
| Conversion | Landing page submissions, demo requests | Human-optimized AI content converts 23% better than human-only. |
| SEO Visibility | Rank velocity, organic traffic | Ranks in top 10 results 34% faster (89 days vs 137 days). |
| Attribution | Pipeline influence, deal closure | Influences 41% of closed deals vs 28% for traditional content. |

Table 2: Performance Layers for ML Content Strategy

Engagement metrics provide immediate feedback on content resonance. A 2024 study by Orbit Media found that AI-generated articles averaging 1,800+ words achieved 47% higher engagement rates than shorter pieces, though quality varied significantly based on editing processes.

Conversion metrics connect content to business outcomes. Marketing teams implementing structured tracking report that algorithmically generated materials with human optimization convert 23% better than purely human-written alternatives. However, pieces published without editorial review convert 31% worse than traditional content, according to HubSpot's 2024 State of Marketing report.

SEO performance requires longer measurement windows but delivers critical validation. Data from SEMrush indicates that machine-generated articles optimized for search intent rank within the top 10 results 34% faster than traditional content—averaging 89 days versus 137 days. This acceleration compounds over time, with properly optimized AI-assisted materials generating 2.8× more organic traffic within the first year.

Leading content operations establish weekly performance reviews examining these metrics across content types, topics, and production methods. This cadence enables rapid iteration—teams adjusting AI-assisted strategies based on performance data achieve 67% better results within 90 days than those conducting quarterly reviews.
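A weekly review of this kind can start from a very small aggregation. The sketch below compares conversion rates by production method. The records are invented sample data for illustration only, and the method labels and the function name are assumptions, not part of any analytics product.

```python
from collections import defaultdict

# Hypothetical weekly records: (production_method, sessions, conversions).
records = [
    ("ai_with_human_edit", 1200, 36),
    ("ai_with_human_edit", 800, 20),
    ("human_only", 1000, 22),
    ("ai_unreviewed", 900, 9),
]


def conversion_rate_by_method(rows):
    """Pool sessions and conversions per method, then divide."""
    totals = defaultdict(lambda: [0, 0])
    for method, sessions, conversions in rows:
        totals[method][0] += sessions
        totals[method][1] += conversions
    # Skip methods with no sessions to avoid dividing by zero.
    return {m: conv / sess for m, (sess, conv) in totals.items() if sess > 0}


rates = conversion_rate_by_method(records)
for method, rate in sorted(rates.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{method}: {rate:.2%}")
```

Pooling sessions before dividing, rather than averaging per-article rates, keeps low-traffic pieces from distorting the comparison between production methods.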

Conclusion

Machine learning-powered content optimization represents the convergence of two strategic imperatives: organizational readiness and performance measurement. Organizations with mature data infrastructure, established workflow processes, and dedicated ML expertise consistently achieve superior measurement outcomes across engagement, conversion, and attribution layers.

Research shows that teams scoring high on readiness dimensions—particularly data quality and technical infrastructure—demonstrate 43% stronger engagement metrics and 37% higher conversion rates compared to organizations attempting ML implementation without foundational capabilities. The framework presented in this analysis connects readiness factors directly to measurement outcomes. Data infrastructure maturity enables sophisticated attribution modeling. Process documentation supports consistent A/B testing protocols. Technical skill depth determines which measurement layers teams can effectively leverage.

Budget allocation affects both tool selection and the granularity of performance tracking available. Content Directors evaluating ML adoption must assess readiness dimensions not as prerequisites to check off, but as predictors of measurement capability and business impact. This integrated framework positions Content Directors to make evidence-based decisions about ML content operations.

Teams can identify specific readiness gaps limiting their measurement capabilities, prioritize infrastructure investments that unlock higher-value metrics, and build business cases grounded in measurable outcomes rather than technology trends. The strategic advantage belongs to organizations that recognize ML content optimization as a system—where readiness assessment informs implementation scope, and measurement frameworks validate the business impact of operational improvements.

References

- 1. The 2025 AI Index Report | Stanford HAI.

- 2. AI raises the productivity bar | Science.

- 3. The Projected Impact of Generative AI on Future Productivity Growth | Wharton.

- 4. Enterprise Content and Marketing Trends: Insights for 2026 | CMI.

- 5. 4 Steps to Take to Ensure the Accuracy of Your AI Content | PRSA.

- 6. About Data-Driven Attribution | Google Ads Help.

- 7. 5-Step Content Marketing Measurement Framework | CMI.

- 8. 7 Marketing KPIs You Should Know & How to Measure Them | HBS Online.

- 9. Principles for Evaluation of AI/ML Model Performance | MIT Lincoln Laboratory.

- 10. Foundation Model for Personalized Recommendation | Netflix Tech Blog.